Seminars

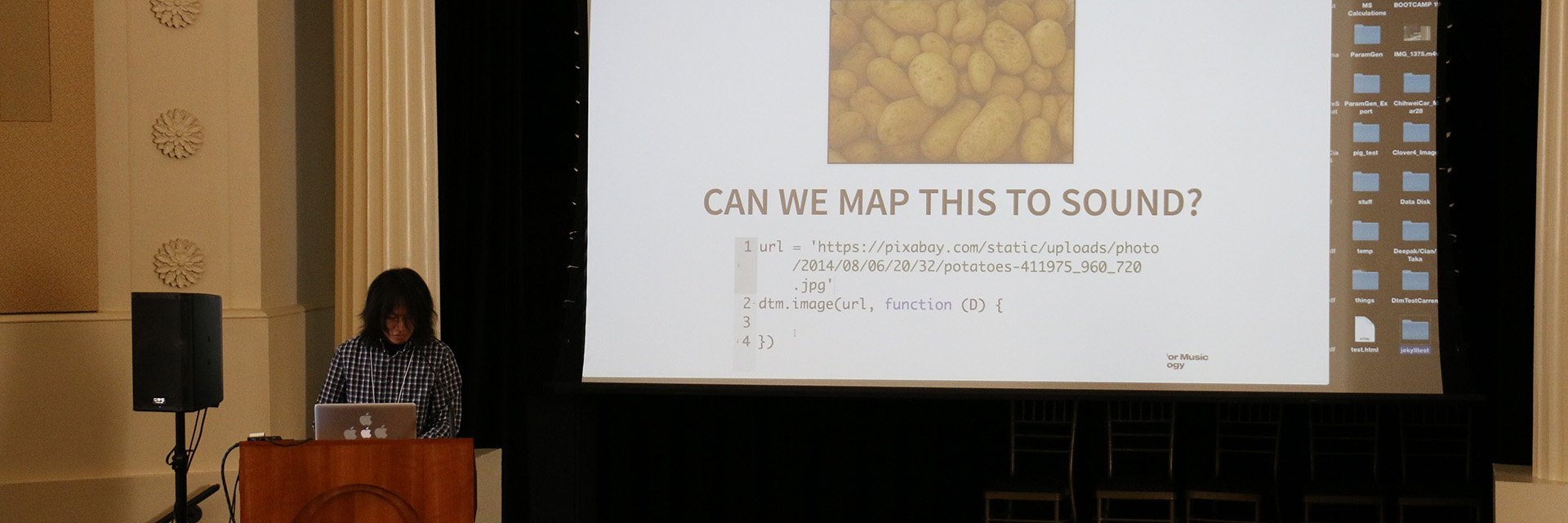

The Georgia Tech Center for Music Technology Seminar Series features both invited speakers and student project presentations. Seminars are held on Mondays from 1:55 to 2:45 p.m. in the West Village Dining Commons, Room 175, on Georgia Tech's campus and are open to the public.

Spring 2024 Seminars

January 8 – Canceled

January 15 – MLK Day

January 22 – Marc Downie

January 29 – Mason Bretan

February 5 – Raghav Sankaranarayanan

February 12 – Bob Strum (Virtual)

February 19 – Craig Veer

February 26 – Thomas Dziwis

March 4 – Yilong Tang, Jingru Li, Joey Steel

March 11 – Walker Ripken, Jeffres Mir, Shan Jiang

March 18 – Spring Break

March 25 – Xu Jingyan, Sun Qianyi, Murray Evan

April 1 – Gao Xuedan, Pargai Dhruv, Allen Brittany

April 8 – Yu Yifeng, Leinwander Danielle, Cash Nicolette

April 15 – Ding Yiwei, Liu Shimiao, Seshadri Pavan

April 22 – Anna Huang